Second Iran's Physics Job Fair

Greetings to our dear colleagues in Iran's physics community,

The poster for the second job fair for Iran's physicists is attached. The 2nd Iran's Physics Job Fair will be held on 14 Dey 1402 at the Faculty of Physics, University of Tehran, from 9:00 to 17:00, alongside the Annual Physics Conference of Iran 1402.

We look forward to your attendance.

Companies seeking to recruit physicists are also warmly invited to take part. Please help the country's physics community in this important effort. We look forward to hearing from you.

Please inform students and graduates in the physics community so that they can visit the fair.

For any questions, please contact the fair's coordinator, Mr. Masoud Mozaffari (09120673360).

With thanks,

Executive Secretary, Iran's Physics Job Fair

Dr. Mohammadizadeh

Announcement: Specialized Webinar on Condensed Matter Physics

We are pleased to announce that on Wednesday, 22 Azar 1402, Shahid Bahonar University of Kerman will host Professor Ryo Maezono and Dr. Tomohiro Ichibha of the Japan Advanced Institute of Science and Technology (JAIST).

Professor Maezono will give a talk titled "Recent Trend in Computational Materials Science Using Data Scientific Technique" at 9:30 a.m., and Dr. Ichibha will give a talk titled "Structure Search on H2-PRE Phase of Solid Hydrogen: Diffusion Monte Carlo Study" at 10:45 a.m., for those interested in condensed matter physics.

Since arrangements have also been made for online participation, we would be delighted if you joined this webinar.

Please make the necessary arrangements to inform colleagues, students, and other interested people in your faculty.

The program poster is also attached to this email.

Webinar link: https://ocvc.uk.ac.ir/

With thanks,

Mohaddeseh Abbasnejad

Faculty member, Faculty of Physics,

Shahid Bahonar University of Kerman

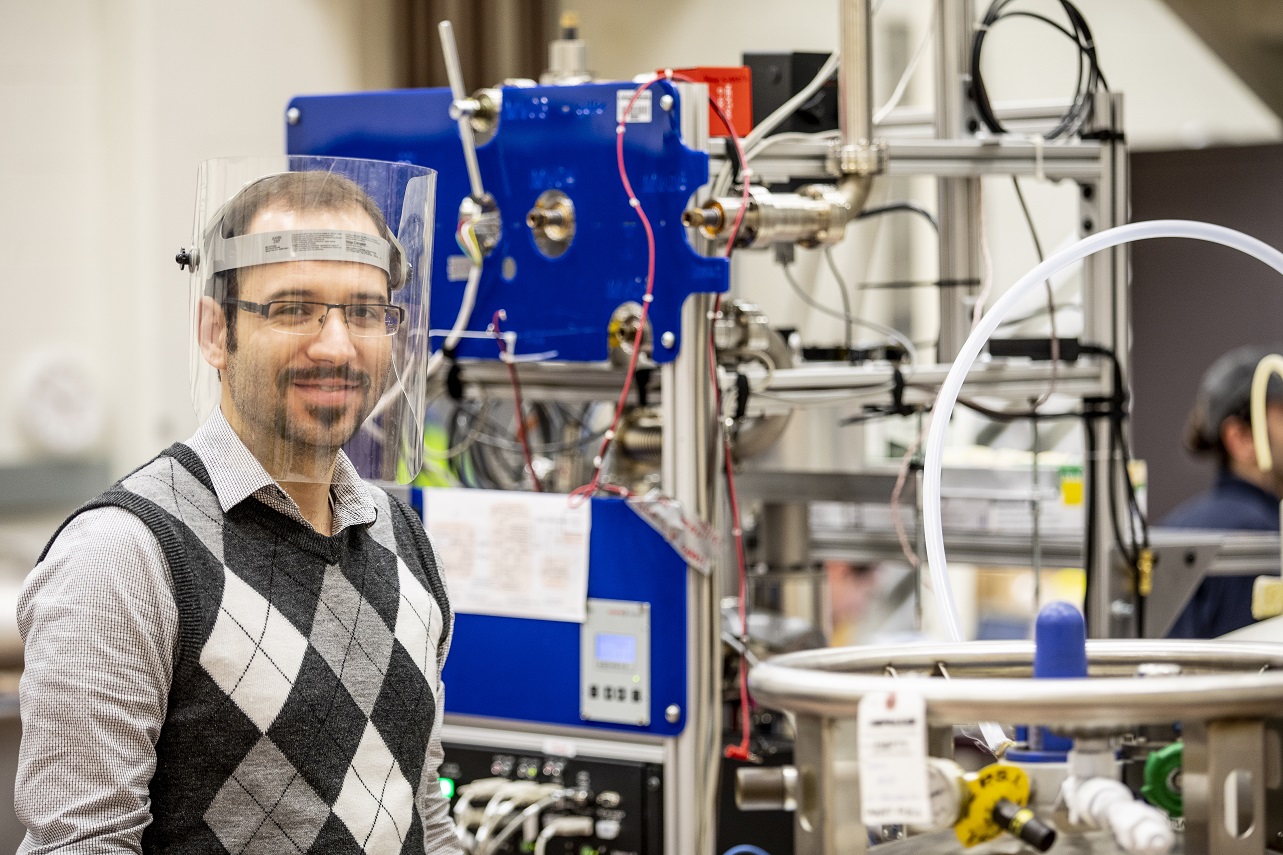

Measuring the neutrino mass with the radio emission of electrons, and an Iranian researcher's role in the project

This research has been published in the prestigious journal Physical Review Letters.

Ashtari received his PhD in physics, specializing in particle physics, from a university in Seattle. Before that, he completed his master's degree at the Faculty of Physics, Sharif University of Technology, and his bachelor's degree at Isfahan University of Technology.

The Physical Society of Iran's science newsletter congratulates Dr. Ashtari on this achievement and reproduces below the following news item, written by him.

***

Neutrinos are among the most abundant particles in the universe. Nevertheless, the exact value of their mass remains an open question in elementary particle physics. Experimental evidence shows that their mass is at least 600,000 times smaller than the electron mass. In the Standard Model of elementary particles, neutrinos are massless fermions. However, the observation of oscillations in solar and atmospheric neutrinos proved that the Standard Model picture is incomplete and that these particles have a nonzero mass. Determining this mass is key to a deeper understanding of elementary particle physics and cosmology.
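As a quick check on the ratio quoted above, the sketch below turns "at least 600,000 times lighter than the electron" into an upper bound in eV/c², using the electron rest energy of about 511 keV. The function name is an illustrative choice, not part of any experiment's analysis code.

```python
# Electron rest energy m_e c^2 in eV (CODATA 2018 value).
ELECTRON_REST_ENERGY_EV = 510_998.95


def mass_ceiling_ev(lighter_by: float) -> float:
    """Upper bound on a particle's mass (in eV/c^2) if it is at least
    `lighter_by` times lighter than the electron."""
    return ELECTRON_REST_ENERGY_EV / lighter_by


bound = mass_ceiling_ev(600_000)
print(f"neutrino mass below about {bound:.2f} eV/c^2")
```

The result, roughly 0.85 eV/c², is of the same order as the direct upper limits obtained from tritium beta decay.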

In a common approach to measuring the neutrino mass, researchers study the beta decay of the tritium nucleus. The energy released in this decay is shared between the electron and the emitted (anti)neutrino. By measuring the maximum energy of the electrons in this decay, one can determine the minimum total energy of the neutrinos and thus place a limit on the effective neutrino mass. The tightest upper limit on the effective neutrino mass obtained so far with this method is 0.8 eV/c², set last year by the KATRIN experiment. KATRIN measures the electrons' energy by passing them through an adjustable electric potential barrier and detecting them directly.
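The endpoint argument can be made concrete with the usual phase-space approximation for the spectrum near its end. The sketch below assumes an endpoint energy of about 18.57 keV for molecular tritium and keeps only the neutrino phase-space factor (E₀ − E)·√((E₀ − E)² − m²), with c = 1 and energies and masses in eV; the Fermi function and other slowly varying factors are treated as constant. It illustrates why a nonzero mass pulls the endpoint down and is not KATRIN's fit model.

```python
import math

E0_EV = 18_574.0  # assumed (approximate) endpoint energy of molecular tritium, eV


def spectrum_shape(electron_energy_ev: float, m_nu_ev: float, e0_ev: float = E0_EV) -> float:
    """Relative count rate near the endpoint (arbitrary units).

    Only the neutrino phase-space factor (E0 - E) * sqrt((E0 - E)^2 - m^2) is kept;
    the Fermi function and other slowly varying factors are treated as constant.
    """
    eps = e0_ev - electron_energy_ev  # energy left for the (anti)neutrino
    if eps <= m_nu_ev:                # too little energy to create a neutrino of this mass
        return 0.0
    return eps * math.sqrt(eps * eps - m_nu_ev * m_nu_ev)


def max_electron_energy(m_nu_ev: float, e0_ev: float = E0_EV) -> float:
    """The spectrum ends where the neutrino is emitted at rest, carrying only its rest energy."""
    return e0_ev - m_nu_ev


for m_nu in (0.0, 0.8, 2.0):
    rate = spectrum_shape(E0_EV - 1.0, m_nu)
    print(f"m = {m_nu} eV: endpoint {max_electron_energy(m_nu):.1f} eV, rate 1 eV below E0 = {rate:.3f}")
```

A heavier neutrino both lowers the endpoint and suppresses the count rate just below it, which is why experiments concentrate on the last few eV of the spectrum.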

Now researchers in the Project 8 collaboration have, for the first time, recorded the beta-decay spectrum of molecular tritium using an indirect method. In this method, an electron placed in a magnetic field begins a spiral (cyclotron) motion and emits part of its energy as radio-frequency radiation. The electron's energy can be determined by measuring the frequency of this radiation.
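The frequency-to-energy relation behind this method is the relativistic cyclotron formula f = eB / (2πγmₑ), with γ = 1 + K/(mₑc²) for an electron of kinetic energy K. The sketch below assumes a uniform field of 1 T (an illustrative value, not necessarily the Project 8 field) and converts in both directions.

```python
import math

E_CHARGE = 1.602176634e-19           # elementary charge, C
ELECTRON_MASS_KG = 9.1093837015e-31  # electron rest mass, kg
ELECTRON_REST_ENERGY_EV = 510_998.95 # m_e c^2, eV


def cyclotron_frequency_hz(kinetic_ev: float, b_tesla: float) -> float:
    """Relativistic cyclotron frequency of an electron with kinetic energy `kinetic_ev`."""
    gamma = 1.0 + kinetic_ev / ELECTRON_REST_ENERGY_EV
    return E_CHARGE * b_tesla / (2.0 * math.pi * gamma * ELECTRON_MASS_KG)


def kinetic_energy_ev(frequency_hz: float, b_tesla: float) -> float:
    """Invert the cyclotron relation: recover the kinetic energy from a measured frequency."""
    gamma = E_CHARGE * b_tesla / (2.0 * math.pi * frequency_hz * ELECTRON_MASS_KG)
    return (gamma - 1.0) * ELECTRON_REST_ENERGY_EV


B_FIELD_T = 1.0  # assumed field strength, for illustration only
f_endpoint = cyclotron_frequency_hz(18_574.0, B_FIELD_T)
f_one_ev_lower = cyclotron_frequency_hz(18_573.0, B_FIELD_T)
print(f"{f_endpoint / 1e9:.3f} GHz, shift per eV: {(f_one_ev_lower - f_endpoint) / 1e3:.1f} kHz")
print(f"recovered energy: {kinetic_energy_ev(f_endpoint, B_FIELD_T):.3f} eV")
```

At 1 T an electron near the tritium endpoint radiates at roughly 27 GHz. Because γ sits in the denominator, a more energetic electron radiates at a slightly lower frequency, and a 1 eV change moves the frequency by tens of kHz, so a precise frequency measurement translates into a precise energy measurement.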

Using this method and a prototype with a volume on the order of a few cubic centimeters, the Project 8 collaboration obtained a limit of 150 eV/c² on the effective neutrino mass. The group hopes that, in the final phase of the experiment, an apparatus with a volume on the order of cubic meters will improve the sensitivity of the neutrino-mass measurement to 0.04 eV/c².

Ali Ashtari Esfahani

Talk by Dr. Takook on quantum theory and its impact on our view of existence and on technology

“Physics Plus: Quantum Theory and Its Impact on Our View of Existence and on Technology”

Speaker:

Dr. Mohammad Vahid Takook

Faculty member, Kharazmi University

APC Laboratory, University of Paris

Hybrid format (in person and online)

Tuesday, 14 Azar, 12:30

Venue: K. N. Toosi University of Technology, Rezaeinejad Campus

The exact in-person venue will be announced before the program begins.

To attend online, use this link.

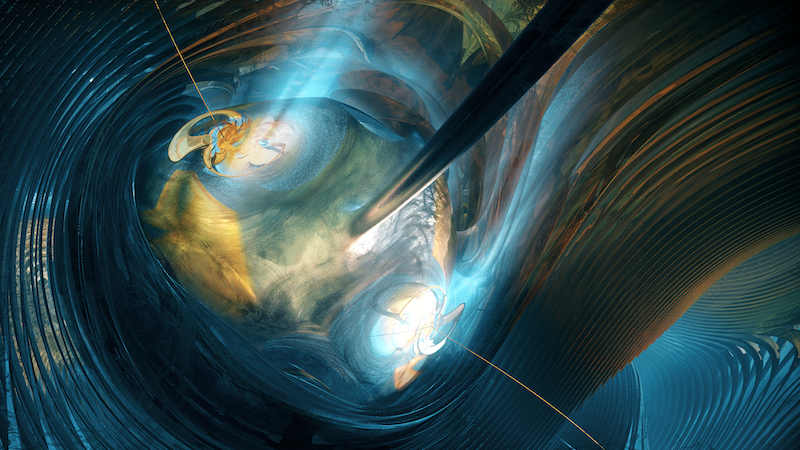

A quantum ratchet made with an optical lattice

A ratchet is a device that produces a net forward motion of an object from a periodic (or random) driving force. Although ratchets are common in watches and in cells (see: Stalling a Molecular Motor), they are hard to make for quantum systems. Now researchers have demonstrated a quantum ratchet for a collection of cold atoms trapped in an optical lattice [1]. By varying the lattice's light fields in a time-dependent way, the researchers showed that they can move the atoms coherently from one lattice site to the next without disturbing the atoms' quantum states.

One type of ratchet (a Hamiltonian ratchet) works by delivering periodic, lossless pushes to a gas or another multiparticle system. For particles that start in certain initial states, the pushes are timed with their motion, and the resulting movement is in a particular forward direction. For particles in other states, the pushes are out of sync, and the particles follow chaotic trajectories with no preferred direction.

Hamiltonian ratchets had previously been demonstrated for quantum systems, but in those ratchets the particles ultimately spread out in space. The ratchet designed by David Guéry-Odelin of the University of Toulouse, France, and his colleagues offers tighter directional control. For this demonstration, the researchers placed 10⁵ rubidium atoms in the periodic potential of an optical lattice. By applying specially tuned modulations to this potential, they showed that the atoms move in discrete steps from one lattice site to the next. At the end of each step, the atoms came to rest in their ground state. Guéry-Odelin says that this well-defined transport could have potential applications in controlling matter waves for quantum experiments.

1. N. Dupont et al., “Hamiltonian ratchet for matter-wave transport,” Phys. Rev. Lett. 131, 133401 (2023).

Source:

Quantum Ratchet Made Using an Optical Lattice

Reference: https://www.psi.ir/news2_fa.asp?id=3957

First images from Euclid

This space telescope, launched by the European Space Agency (ESA) on 1 July of this year, carries a 600-megapixel detector and observes in the visible and infrared. Euclid reached its destination at the second Lagrange point after a month-long journey and is expected to survey the universe for six years.

Through photometry and spectroscopy of galaxies out to redshifts of nearly 2, Euclid aims to give cosmologists valuable knowledge about the expansion of the universe and the formation of structure. One of the first released images, shown in this news item, is Euclid's view of the Perseus Cluster of galaxies in the constellation Perseus. The image is exceptional in both resolution and field of view: more than a thousand galaxies belonging to the cluster and a hundred thousand galaxies in the background are visible. The Perseus Cluster lies about 240 million light-years from us, and this is the first time that some of the faint member galaxies of this gravitationally bound structure have been observed.

These early images from Euclid promise new discoveries from the space telescope.

Source of news and image: ESA website

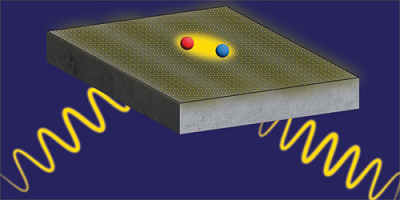

Strong light–matter coupling in the simplest geometry

Structures that exhibit strong light–matter coupling can change their optical properties when exposed to light, which offers many potential applications in nanophotonics and optoelectronics. Among the most promising materials for building such structures are monolayer transition-metal dichalcogenides (TMDs) on a silver film. Typically, this heterostructure is enclosed in a microcavity, which boosts the coupling strength at the cost of greater fabrication complexity. The microcavity can also limit access to the TMD for postfabrication manipulation. Now Nicolas Zorn Morales at Humboldt University of Berlin and his colleagues have shown that strong light–matter coupling can be achieved even without such a microcavity [1].

The researchers built their structure from monolayer tungsten disulfide grown by physical vapor deposition. Most experiments on TMDs have used flakes exfoliated from bulk crystals, which are typically smaller than 100 µm². Vapor deposition, in contrast, yields centimeter-scale samples. The team preserved the high quality of this large monolayer while transferring it onto the silver film, which made it possible to probe the light–matter coupling with total internal reflection ellipsometry (TIRE). TIRE, commonly used in biosensing applications, involves shining light on a sample and measuring the amplitude and phase changes of the reflection. The phase change directly distinguishes between the strong- and weak-coupling regimes, whereas techniques used in previous studies can give ambiguous results.

The team measured the induced “mixing” between light and the energy levels of matter in their samples to be 26 meV (higher values indicate stronger light–matter coupling). This coupling strength is about half of that reported for devices that use microcavities, but it is still within the strong-coupling regime. The researchers say that their simple, highly tunable devices provide a new platform for designing plasmonic modulators, switches, and sensors.
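A common textbook way to picture strong coupling is two coupled oscillators: an exciton with energy E_x and a surface plasmon polariton with energy E_p, coupled with strength g. The eigenenergies E± = (E_x + E_p)/2 ± √(g² + (E_x − E_p)²/4) anticross, and at zero detuning they are split by 2g. The sketch below uses hypothetical energies and a hypothetical g; it is not the paper's analysis, which relied on the TIRE phase response.

```python
import math


def polariton_energies(e_exciton: float, e_plasmon: float, g: float) -> tuple[float, float]:
    """Lower and upper eigenenergies of a 2x2 coupled-oscillator Hamiltonian (losses neglected)."""
    mean = 0.5 * (e_exciton + e_plasmon)
    half_split = math.sqrt(g * g + 0.25 * (e_exciton - e_plasmon) ** 2)
    return mean - half_split, mean + half_split


# Hypothetical values in eV, chosen only to illustrate the anticrossing.
E_EXCITON = 2.00
G = 0.02

for e_plasmon in (1.90, 1.95, 2.00, 2.05, 2.10):
    lower, upper = polariton_energies(E_EXCITON, e_plasmon, G)
    print(f"E_p = {e_plasmon:.2f} eV -> E- = {lower:.4f}, E+ = {upper:.4f}, split = {upper - lower:.4f}")
```

The minimum splitting, 2g at zero detuning, is what a single "coupling strength" figure usually summarizes. Whether a system is in the strong-coupling regime also depends on how this splitting compares with the exciton and plasmon linewidths, which this lossless sketch ignores.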

1. N. Zorn Morales et al., “Strong coupling of monolayer WS2 excitons and surface plasmon polaritons in a planar Ag/WS2 hybrid structure,” Phys. Rev. B 108, 165426 (2023).

Source:

Strong Light–Matter Coupling in the Simplest Geometry

Call for membership and collaboration: Women's Branch of the Physical Society of Iran

Measuring the Intensity of the World’s Most Powerful Lasers


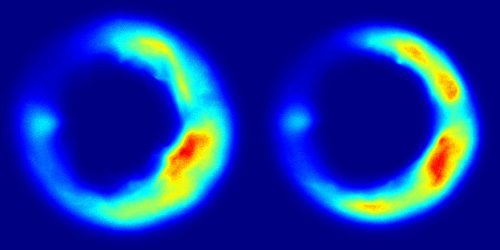

Scientists are building extremely powerful lasers. For around a trillionth of a second, one of these machines will emit thousands of times the power of the US electric grid. Such devices could allow researchers to explore unsolved problems related to fundamental physics principles and to develop innovative laser-based technologies. But these applications require precise knowledge of the intensity of any such laser, a parameter that is difficult to measure, as no known material can withstand the anticipated extreme conditions of the laser beams. Now Wendell Hill at the University of Maryland, College Park, and his colleagues demonstrate a technique that uses electrons to determine this intensity [1].

For their demonstration, the researchers fired a high-power laser pulse at a low-density gas, causing the gas to release electrons. The laser’s electromagnetic field then propelled these electrons forward and out of the laser beam. The team observed the angular distribution of the ejected electrons in real time using surfaces called image plates that act like photographic film.

Analyzing these image plates, Hill and his colleagues find that the angle of the emitted electrons relative to the beam’s direction is inversely proportional to a laser’s intensity, allowing this angle to serve as an intensity measure. The researchers demonstrate their method for laser intensities of 10¹⁹–10²⁰ W/cm² and suggest that it could be applied to intensities in the range of 10¹⁸–10²¹ W/cm². They say that the approach could help scientists in field testing the next generation of ultrapowerful lasers and in studying, with high precision, the interaction between matter and strong electromagnetic fields.
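Why the angle shrinks as intensity grows can be seen in an idealized plane-wave model (an assumption, not the method of the study): a free electron initially at rest in a linearly polarized wave leaves at an angle θ to the beam axis with tan²θ = 2/(γ − 1), and the largest kinetic energy it can reach corresponds to γ − 1 = a₀²/2, where a₀ is the normalized vector potential. The sketch below computes a₀ from the intensity and an assumed 0.8 µm wavelength, then evaluates that angle.

```python
import math

EPS0 = 8.8541878128e-12              # vacuum permittivity, F/m
C = 299_792_458.0                    # speed of light, m/s
E_CHARGE = 1.602176634e-19           # elementary charge, C
ELECTRON_MASS_KG = 9.1093837015e-31  # electron mass, kg


def a0_linear(intensity_w_cm2: float, wavelength_m: float) -> float:
    """Normalized vector potential a0 = eE/(m_e * omega * c) for a linearly polarized plane wave."""
    intensity_si = intensity_w_cm2 * 1e4                 # W/cm^2 -> W/m^2
    e_peak = math.sqrt(2.0 * intensity_si / (EPS0 * C))  # from I = eps0 * c * E^2 / 2
    omega = 2.0 * math.pi * C / wavelength_m
    return E_CHARGE * e_peak / (ELECTRON_MASS_KG * omega * C)


def exit_angle_deg(intensity_w_cm2: float, wavelength_m: float = 0.8e-6) -> float:
    """Angle to the beam axis for an electron reaching the plane-wave maximum gamma - 1 = a0^2 / 2,
    using tan^2(theta) = 2 / (gamma - 1). The 0.8 um default wavelength is an assumption."""
    a0 = a0_linear(intensity_w_cm2, wavelength_m)
    gamma_minus_1 = 0.5 * a0 * a0
    return math.degrees(math.atan(math.sqrt(2.0 / gamma_minus_1)))


for intensity in (1e18, 1e19, 1e20, 1e21):
    print(f"I = {intensity:.0e} W/cm^2 -> a0 = {a0_linear(intensity, 0.8e-6):.2f}, "
          f"theta = {exit_angle_deg(intensity):.1f} deg")
```

In this toy picture tan θ = 2/a₀, so at high intensity the angle falls roughly as the inverse square root of the intensity; it only illustrates the monotonic trend that lets a measured angle stand in for intensity and does not reproduce the study's calibration.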

–Ryan Wilkinson

Ryan Wilkinson is a Corresponding Editor for Physics Magazine based in Durham, UK.

References

- S. Ravichandran et al., “Imaging electron angular distributions to assess a full-power petawatt-class laser focus,” Phys. Rev. A 108, 053101 (2023).

Weekly meetings of the Quantum Information Science Group, Institute for Research in Fundamental Sciences (IPM)

Starting this week, the Quantum Information Science group of the Institute for Research in Fundamental Sciences (IPM) will begin its weekly program. The program will consist of formal seminars (every other week) and discussion sessions, mostly in journal-club format (every other week). Whenever possible, sessions will be held in person at the School of Physics, with the option of joining remotely via Skyroom:

https://www.skyroom.online/ch/

This week's program: journal club

Discussion of the following papers:

What exactly does Bekenstein bound?

https://arxiv.org/pdf/2309.

What if Quantum Gravity is "just" Quantum Information Theory?

https://arxiv.org/abs/2310.

Time and place:

Saturday, 20 Aban 1402

14:00–16:00

Room A, ground floor, School of Physics building

The sessions are open to everyone interested.

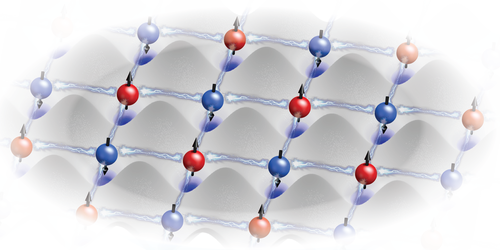

Inventing two new types of superconductivity

Future electronics depends on the discovery of unique materials. Sometimes, however, the natural topology of atoms makes it difficult to create new physical effects. To tackle this problem, scientists at the University of Zurich in Switzerland have now successfully designed superconductors one atom at a time, creating new states of matter.

What will the computers of the future look like? How will they work? The search for answers to these questions is a major driver of basic physics research. There are several possible scenarios, from further development of classical electronics to neuromorphic computing and quantum computers.

The common element in all these approaches is that they rely on new physical effects, some of which have so far only been predicted in theory. Considerable effort and advanced tools in the search for new quantum materials are enabling researchers to create such effects.

A new approach to superconductivity

In a recent study published in Nature Physics, the research group of Professor Titus Neupert at the University of Zurich (UZH), in close collaboration with physicists at the Max Planck Institute of Microstructure Physics in Halle, Germany, has presented a possible solution. The researchers created the materials they needed themselves, one atom at a time.

The researchers are focusing on new types of superconductors, which are particularly interesting because they exhibit zero electrical resistance at low temperatures. These superconductors, sometimes called "ideal diamagnets," are used in many quantum computers because of their unusual interactions with magnetic fields. Theoretical physicists have spent years investigating and predicting various superconducting states. "However, only a few have so far been conclusively demonstrated in materials," says Professor Neupert.

Two new types of superconductivity

In their exciting collaboration, the UZH researchers theoretically predicted how the atoms must be arranged to create a new superconducting phase, and the team in Germany then carried out the experiments to implement the corresponding topology. Using a scanning tunneling microscope, they moved the atoms and placed them at the correct sites with atomic precision.

The same technique was also used to measure the magnetic and superconducting properties of the system. By depositing chromium atoms on the surface of superconducting niobium, the researchers were able to create two new types of superconductivity. Similar methods had previously been used to manipulate metal atoms and molecules, but until now it had never been possible to build two-dimensional superconductors this way.

The results not only confirm the physicists' theoretical predictions but also give them reason to speculate about what other new states of matter could be created in this way and how they might be used in future quantum computers.

“Two-dimensional Shiba lattices as a possible platform for crystalline topological superconductivity” by Martina O. Soldini, Felix Küster, Glenn Wagner, Souvik Das, Amal Aldarawsheh, Ronny Thomale, Samir Lounis, Stuart S. P. Parkin, Paolo Sessi and Titus Neupert, 10 July 2023, Nature Physics. DOI: 10.1038/s41567-023-02104-5

Source:

Creating New States of Matter – Researchers Invent Two New Types of Superconductivity

News source: https://www.psi.ir/news2_fa.asp?id=3947

Breakthrough Prize for Quantum Field Theorists


• Physics 16, 165

The 2024 Breakthrough Prize in Fundamental Physics goes to John Cardy and Alexander Zamolodchikov for their work in applying field theory to diverse problems.


Many physicists hear the words “quantum field theory,” and their thoughts turn to electrons, quarks, and Higgs bosons. In fact, the mathematics of quantum fields has been used extensively in other domains outside of particle physics for the past 40 years. The 2024 Breakthrough Prize in Fundamental Physics has been awarded to two theorists who were instrumental in repurposing quantum field theory for condensed-matter, statistical physics, and gravitational studies.

“I really want to stress that quantum field theory is not the preserve of particle physics,” says John Cardy, a professor emeritus from the University of Oxford. He shares the Breakthrough Prize with Alexander Zamolodchikov from Stony Brook University, New York.

The Breakthrough Prize is perhaps the “blingiest” of science awards, with $3 million being given for each of the five main awards (three for life sciences, one for physics, and one for mathematics). Additional awards are given to early-career scientists. The founding sponsors of the Breakthrough Prize are entrepreneurs Sergey Brin, Priscilla Chan and Mark Zuckerberg, Julia and Yuri Milner, and Anne Wojcicki.

The fundamental physics prize going to Cardy and Zamolodchikov is “for profound contributions to statistical physics and quantum field theory, with diverse and far-reaching applications in different branches of physics and mathematics.” When notified about the award, Zamolodchikov expressed astonishment. “I never thought to find myself in this distinguished company,” he says. He was educated as a nuclear engineer in the former Soviet Union but became interested in particle physics. “I had to clarify for myself the basics.” The basics was quantum field theory, which describes the behaviors of elementary particles with equations that are often very difficult to solve. In the early 1980s, Zamolodchikov realized that he could make more progress in a specialized corner of mathematics called two-dimensional conformal field theory (2D CFT). “I was lucky to stumble on this interesting situation where I could find exact solutions,” Zamolodchikov says.

CFT describes “scale-invariant” mappings from one space to another. “If you take a part of the system and blow it up by the right factor, then that part looks like the whole in a statistical sense,” explains Cardy. More precisely, conformal mappings preserve the angles between lines as the lines stretch or contract in length. In certain situations, quantum fields obey this conformal symmetry. Zamolodchikov’s realization was that solving problems in CFT—especially in 2D where the mathematics is easiest—gives a starting point for studying generic quantum fields, Cardy says.

Cardy started out as a particle physicist, but he became interested in applying quantum fields to the world beyond elementary particles. When he heard about the work of Zamolodchikov and other scientists in the Soviet Union, he immediately saw the potential and versatility of 2D CFT. One of the first places he applied this mathematics was in phase transitions, which arise when, for example, the atomic spins of a material suddenly align to form a ferromagnet. Within the 2D CFT framework, Cardy showed that you could perform computations on small systems—with just ten spins, for example—and extract information that pertains to an infinitely large system. In particular, he was able to calculate the critical exponents that describe the behavior of various phase transitions.
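As an illustration of how 2D CFT data turn into critical exponents, the sketch below takes the textbook scaling dimensions of the 2D Ising model, x_σ = 1/8 for the spin field and x_ε = 1 for the energy field, and applies the standard scaling relations in d = 2. It shows the generic dictionary from scaling dimensions to exponents, not a reconstruction of Cardy's own finite-size calculations.

```python
from fractions import Fraction


def exponents_from_scaling_dims(x_sigma: Fraction, x_eps: Fraction, d: int = 2) -> dict[str, Fraction]:
    """Critical exponents from the scaling dimensions of the order-parameter field (x_sigma)
    and the energy field (x_eps), via standard scaling relations."""
    nu = 1 / (d - x_eps)                   # correlation-length exponent
    eta = 2 * x_sigma - d + 2              # anomalous dimension: 2 x_sigma = d - 2 + eta
    beta = nu * x_sigma                    # order-parameter exponent
    gamma = nu * (2 - eta)                 # susceptibility exponent (Fisher relation)
    alpha = 2 - d * nu                     # specific-heat exponent (hyperscaling)
    delta = (d + 2 - eta) / (d - 2 + eta)  # critical-isotherm exponent
    return {"nu": nu, "eta": eta, "beta": beta, "gamma": gamma, "alpha": alpha, "delta": delta}


ising = exponents_from_scaling_dims(Fraction(1, 8), Fraction(1))
for name, value in ising.items():
    print(f"{name} = {value}")
```

Exact rational arithmetic recovers the known 2D Ising values ν = 1, η = 1/4, β = 1/8, γ = 7/4, δ = 15 and α = 0 (the specific heat diverges logarithmically).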

Cardy found other uses of 2D CFT in, for example, percolation theory and quantum spin chains. “I would hope people consider my contributions as being quite broad, because that’s what I tried to be over the years,” he says. Zamolodchikov also explored the application of quantum field theory in diverse topics, such as critical phenomena and fluid turbulence. “I tried to develop it in many respects,” he says. The two theorists never collaborated, but they both confess to admiring the other’s work. “We’ve written papers on very similar things,” Cardy says. “I would say that we have a friendly rivalry.” He remembers first encountering Zamolodchikov in 1986 at a conference organized in Sweden as a “neutral” meeting point for Western and Soviet physicists. “It was wonderful to meet him and his colleagues for the first time,” Cardy says.

“Zamolodchikov and Cardy are the oracles of two dimensions,” says Pedro Vieira, a quantum field theorist from the Perimeter Institute in Canada. He says that one of the things that Zamolodchikov showed was the infinite number of symmetries that can exist in 2D CFT. Cardy was especially insightful in how to apply the mathematical insights of 2D to other dimensions. Vieira says of the pair, “They understood the power of 2D physics, in that it is very simple and elegant, and at the same time mathematically rich and complex.”

Vieira says that the work of Zamolodchikov and Cardy continues to be important for a wide range of researchers, including condensed-matter physicists who study 2D surfaces and string theorists who model the motion of 1D strings moving in time. One topic attracting a lot of attention these days is the so-called AdS/CFT correspondence, which connects CFT mathematics with gravitational theory (see Viewpoint: Are Black Holes Really Two Dimensional?). Cardy says that there’s also been a great deal of recent work on CFT in dimensions more than two. “I’m sure that [higher-dimensional CFT] will win lots of awards in the future,” he says. Zamolodchikov continues to work on extensions of quantum field theory, such as the “TT̄ deformation,” that may provide insights into fundamental physics, just as CFT has done.

Zamolodchikov and Cardy and the 18 other Breakthrough winners will receive their awards on April 13, 2024, at a red-carpet ceremony that routinely attracts celebrities from film, music, and sports. Cardy says that he is looking forward to it. “I like a good party.”

–Michael Schirber

Michael Schirber is a Corresponding Editor for Physics Magazine based in Lyon, France.

REFERENCE: https://physics.aps.org/articles/v16/165?utm_campaign=weekly&utm_medium=email&utm_source=emailalert

Quantum Ratchet Made Using an Optical Lattice


Researchers have turned an optical lattice into a ratchet that moves atoms from one site to the next.

A ratchet is a device that produces a net forward motion of an object from a periodic (or random) driving force. Although ratchets are common in watches and in cells (see Focus: Stalling a Molecular Motor), they are hard to make for quantum systems. Now researchers demonstrate a quantum ratchet for a collection of cold atoms trapped in an optical lattice [1]. By varying the lattice’s light fields in a time-dependent way, the researchers show that they can move the atoms coherently from one lattice site to the next without disturbing the atoms’ quantum states.

One type of ratchet (a Hamiltonian ratchet) works by providing periodic, nonlossy pushes to a gas or other multiparticle system. For particles starting in certain initial states, the pushes are timed with their motion, and the resulting movement is in a particular forward direction. For particles in other states, the pushes are out of sync, and the particles travel in chaotic trajectories with no preferred direction.

Hamiltonian ratchets have previously been demonstrated for quantum systems, but for those ratchets the particles ended up spread out in space. The ratchet designed by David Guéry-Odelin from the University of Toulouse, France, and his colleagues has tighter directional control. For the demonstration, the researchers placed 10⁵ rubidium atoms in the periodic potential of an optical lattice. Applying specially tuned modulations to this potential, they showed that the atoms moved in discrete steps from one lattice site to the next. At the end of each step, the atoms came to rest in their ground state. This well-defined transport could have potential applications in controlling matter waves for quantum experiments, Guéry-Odelin says.

–Michael Schirber

Michael Schirber is a Corresponding Editor for Physics Magazine based in Lyon, France.

References

- N. Dupont et al., “Hamiltonian ratchet for matter-wave transport,” Phys. Rev. Lett. 131, 133401 (2023).

How AI and ML Will Affect Physics


- Department of Physics, University of Maryland, College Park, College Park, MD

• Physics 16, 166

The more physicists use artificial intelligence and machine learning, the more important it becomes for them to understand why the technology works and when it fails.

The advent of ChatGPT, Bard, and other large language models (LLM) has naturally excited everybody, including the entire physics community. There are many evolving questions for physicists about LLMs in particular and artificial intelligence (AI) in general. What do these stupendous developments in large-data technology mean for physics? How can they be incorporated in physics? What will be the role of machine learning (ML) itself in the process of physics discovery?

Before I explore the implications of those questions, I should point out there is no doubt that AI and ML will become integral parts of physics research and education. Even so, similar to the role of AI in human society, we do not know how this new and rapidly evolving technology will affect physics in the long run, just as our predecessors did not know how transistors or computers would affect physics when the technologies were being developed in the early 1950s. What we do know is that the impact of AI/ML on physics will be profound and ever evolving as the technology develops.

The impact is already being felt. Just a cursory search of Physical Review journals for “machine learning” in articles’ titles, abstracts, or both returned 1456 hits since 2015 and only 64 for the entire period from Physical Review’s debut in 1893 to 2014! The second derivative of ML usage in articles is also increasing. The same search yielded 310 Physical Review articles in 2022 with ML in the title, abstract, or both; in the first 6 months of 2023, there are already 189 such publications.
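The growth claim can be checked with simple arithmetic on the quoted counts. The sketch below assumes that publications are spread evenly over 2023, which is only a rough approximation, and annualizes the first-half figure.

```python
# Counts quoted in the text (Physical Review articles with "machine learning" in title and/or abstract).
hits_2015_onward = 1456
hits_1893_to_2014 = 64
hits_2022 = 310
hits_2023_first_half = 189

# Assumption: a uniform publication rate, so a half-year count doubles to a full-year estimate.
annualized_2023 = hits_2023_first_half * 12 / 6
growth_vs_2022 = annualized_2023 / hits_2022 - 1
ratio_recent_vs_early = hits_2015_onward / hits_1893_to_2014

print(f"annualized 2023: {annualized_2023:.0f}, growth vs 2022: {growth_vs_2022:.1%}")
print(f"2015 onward vs 1893-2014: {ratio_recent_vs_early:.1f}x")
```

Under the even-rate assumption, 2023 would reach about 378 articles, roughly a fifth more than in 2022. Testing the statement about the second derivative would need yearly counts for at least three consecutive years; the sketch only shows that the yearly rate is still rising.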

ML is already being used extensively in physics, which is unsurprising since physics deals with data that are often very large, as is the case in some high-energy physics and astrophysics experiments. In fact, physicists have been using some forms of ML for a long time, even before the term ML became popular. Neural networks—the fundamental pillars of AI—also have a long history in theoretical physics, as is apparent from the fact that the term “neural networks” appears in hundreds of Physical Review articles’ titles and abstracts since its first usage in 1985 in the context of models for understanding spin glasses. The AI/ML use of neural networks is quite different from the way neural networks appear in spin glass models, but the basic idea of representing a complex system using neural networks is shared by both cases. ML and neural networks have been woven into the fabric of physics going back 40 years or more.

What has changed is the availability of very large computer clusters with huge computing power, which enable ML to be applied in a practical manner to many physical systems. For my field, condensed-matter physics, these advances mean that ML is being increasingly used to analyze large datasets involving materials properties and predictions. In these complex situations, the use of AI/ML will become a routine tool for every professional physicist, just like vector calculus, differential geometry, and group theory. Indeed, the use of AI/ML will soon become so widespread that we simply will not remember why it was ever a big deal. At that point, this opinion piece of mine will look a bit naive, much like pontifications in the 1940s about using computers for doing physics.

But what about deeper usage of AI/ML in physics beyond using it as an everyday tool? Can they help us solve deep problems of great significance? Could physicists, for example, have used AI/ML to come up with the Bardeen-Cooper-Schrieffer theory of superconductivity in 1950 if they had been available? Can AI/ML revolutionize doing theoretical physics by finding ideas and concepts such as the general theory of relativity or the Schrödinger equation? Most physicists I talk to firmly believe that this would be impossible. Mathematicians feel this way too. I do not know of any mathematician who believes that AI/ML can prove, say, Riemann’s hypothesis or Goldbach’s conjecture. I, on the other hand, am not so sure. All ideas are somehow deeply rooted in accumulated knowledge, and I am unwilling to assert that I already know what AI/ML won’t ever be able to do. After all, I remember the time when there was a widespread feeling that AI could never beat the great champions of the complex game of Go. A scholarly example is the ability of DeepMind’s AlphaFold to predict what structure a protein’s string of amino acids will adopt, a feat that was thought impossible 20 years ago.

This brings me to my final point. Doing physics using AI/ML is happening, and it will become routine soon. But what about understanding the effectiveness of AI/ML and of LLMs in particular? If we think of an LLM as a complex system that suddenly becomes extremely predictive after it has trained on a huge amount of data, the natural question for a physicist to ask is what is the nature of that shift? Is it a true dynamical phase transition that occurs at some threshold training point? Or is it just the routine consequence of interpolations among known data, which just work empirically, sometimes even when extrapolated? The latter, which is what most professional statisticians seem to believe, involves no deep principle. But the former involves what could be called the physics of AI/ML and constitutes in my mind the most important intellectual question: Why does AI/ML work and when does it fail? Is there a phase transition at some threshold where the AI/ML algorithm simply predicts everything correctly? Or is the algorithm just some huge interpolation, which works because the amount of data being interpolated is so gigantic that most questions simply fall within its regime of validity? As physicists, we should not just be passive users of AI/ML but also dig into these questions. To paraphrase a famous quote from a former US president, we should not only ask what AI/ML can do for us (a lot actually), but also what we can do for AI/ML.

About the Author

Sankar Das Sarma is the Richard E. Prange Chair in Physics and a Distinguished University Professor at the University of Maryland, College Park. He is also a fellow of the Joint Quantum Institute and the director of the Condensed Matter Theory Center, both at the University of Maryland. Das Sarma received his PhD from Brown University, Rhode Island. He has belonged to the University of Maryland physics faculty since 1980. His research interests are condensed-matter physics, quantum computation, and statistical mechanics.

برندگان نوبل فیزیک ۲۰۲۳ معرفی شدند.

به گزارش ایسنا و به نقل از نوبل پرایز، جایزه نوبل فیزیک ۲۰۲۳ به «پیر آگوستینی»، «فرنس کراوس» و «آن لوهولیر» برای پژوهش در مورد «روشهای تجربی که پالسهای اتوثانیهای نور را برای مطالعه دینامیک الکترون در ماده تولید میکنند» اهدا شد.

ثبت کوتاه ترین لحظات ممکن میشود

سه برنده جایزه نوبل فیزیک ۲۰۲۳ به خاطر آزمایشهایی شناخته میشوند که راههای جدیدی را برای کاوش در دنیای الکترونهای درون اتمها و مولکولها در اختیار بشریت گذاشتهاند. «پیر آگوستینی»(Pierre Agostini)، «فرنس کراوس»(Ferenc Krausz) و «آن لوهیلیر»(Anne L’Huillier) راهی را برای ایجاد پالسهای بسیار کوتاه نور نشان دادهاند که میتوان از آنها برای اندازهگیری کردن فرآیندهای سریعی استفاده کرد که طی آنها الکترونها حرکت میکنند یا انرژی را تغییر میدهند.

Fast-moving events flow into each other and, much like a film made of still images, are perceived as continuous motion. To investigate really brief events, special technology is needed. In the world of electrons, changes occur in a few tenths of an attosecond, an extremely short time.

The laureates’ experiments have produced pulses of light so short that they are measured in attoseconds, thereby demonstrating that these pulses can be used to provide images of processes inside atoms and molecules.

In 1987, L’Huillier discovered that many different overtones of light arose when she transmitted infrared laser light through a noble gas. Each overtone is a light wave with a given number of cycles for each cycle of the laser light. The overtones arise from the laser light interacting with atoms in the gas: the interaction gives some electrons extra energy, which is then emitted as light. L’Huillier has continued to explore this phenomenon, laying the groundwork for subsequent breakthroughs.
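Because each overtone completes an integer number q of cycles for every laser cycle, its frequency is q times the laser frequency: its wavelength is λ/q and its photon energy is q times the laser photon energy. In a gas of atoms, which has inversion symmetry, only odd multiples appear. The minimal sketch below tabulates this, assuming a hypothetical 800 nm driving laser; the wavelength is an illustrative choice, not a value from the laureates’ experiments.

```python
HC_EV_NM = 1239.84198  # h*c in eV·nm, so E[eV] = HC_EV_NM / wavelength[nm]

def harmonic(driver_nm: float, order: int) -> tuple[float, float]:
    """Wavelength (nm) and photon energy (eV) of the harmonic of the given order."""
    wavelength_nm = driver_nm / order  # q cycles per laser cycle -> q times the frequency
    return wavelength_nm, HC_EV_NM / wavelength_nm

if __name__ == "__main__":
    driver_nm = 800.0  # hypothetical driving-laser wavelength (an assumption)
    for q in range(1, 32, 2):  # odd orders only: 1, 3, ..., 31
        wavelength_nm, energy_ev = harmonic(driver_nm, q)
        print(f"order {q:2d}: {wavelength_nm:7.2f} nm, {energy_ev:6.2f} eV")
```

Under this assumption the 25th harmonic lies at 32 nm, in the extreme ultraviolet, which shows how a near-infrared laser can be converted into much shorter-wavelength light.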

In 2001, Agostini succeeded in producing and investigating a series of consecutive light pulses, in which each pulse lasted just 250 attoseconds. At the same time, Krausz was working on another type of experiment, one that made it possible to isolate a single light pulse lasting 650 attoseconds.
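Why a set of phase-locked odd harmonics produces a train of attosecond bursts can be checked numerically. The sketch below is a textbook Fourier illustration, not a model of Agostini’s or Krausz’s experiments. It assumes a hypothetical 800 nm driver, harmonic orders 11 to 29, equal amplitudes and identical phases (real harmonics carry a frequency-dependent phase, the so-called attochirp). It sums the harmonics as complex exponentials, finds the intensity bursts within one laser cycle and estimates their duration. Isolating a single burst, as in Krausz’s experiment, needs additional techniques that are not modelled here.

```python
import cmath
import math

C = 299_792_458.0  # speed of light, m/s

def intensity_profile(driver_nm: float, orders: list[int], samples: int = 20000):
    """Cycle-averaged intensity |sum_q exp(i q w t)|^2 sampled over one laser period.

    Assumes every harmonic has unit amplitude and zero phase (no attochirp).
    Returns (sample spacing in attoseconds, list of intensities).
    """
    omega = 2 * math.pi * C / (driver_nm * 1e-9)  # laser angular frequency, rad/s
    dt = (2 * math.pi / omega) / samples
    profile = [abs(sum(cmath.exp(1j * q * omega * i * dt) for q in orders)) ** 2
               for i in range(samples)]
    return dt * 1e18, profile

def burst_times_as(driver_nm: float, orders: list[int]) -> list[float]:
    """Times (attoseconds) of the main intensity bursts within one laser period."""
    dt_as, profile = intensity_profile(driver_nm, orders)
    top = max(profile)
    times = []
    for i, value in enumerate(profile):
        prev, nxt = profile[i - 1], profile[(i + 1) % len(profile)]
        # local maxima above half the peak are main bursts; side lobes stay far lower
        if value > 0.5 * top and value >= prev and value > nxt:
            times.append(i * dt_as)
    return times

def burst_fwhm_as(driver_nm: float, orders: list[int]) -> float:
    """Full width at half maximum (attoseconds) of the burst centred at t = 0."""
    dt_as, profile = intensity_profile(driver_nm, orders)
    half = profile[0] / 2
    forward = 0
    while profile[forward + 1] >= half:      # samples after t = 0 above half maximum
        forward += 1
    backward = 0
    while profile[-(backward + 1)] >= half:  # samples before t = 0 (wrapping around)
        backward += 1
    return (forward + backward + 1) * dt_as  # accurate to about one sample

if __name__ == "__main__":
    orders = list(range(11, 30, 2))  # hypothetical plateau: odd harmonics 11 to 29
    print("burst times (as):", [round(t) for t in burst_times_as(800.0, orders)])
    print("burst FWHM (as):", round(burst_fwhm_as(800.0, orders)))
```

With these idealized inputs the bursts repeat every half laser cycle (about 1.33 fs for 800 nm) and each lasts roughly 120 as. Pulses measured in practice are typically longer, partly because the harmonics are not perfectly in phase.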

The laureates’ contributions have made it possible to investigate processes so rapid that they were previously impossible to follow.

“We can now open the door to the world of electrons. Attosecond physics gives us the opportunity to understand mechanisms that are governed by electrons. The next step will be utilizing them,” said Eva Olsson, Chair of the Nobel Committee for Physics.

The phenomenon has potential applications in many different areas. In electronics, for example, it is important to understand and control how electrons behave in a material. Attosecond pulses can also be used to identify different molecules in various fields, including medical diagnostics.

Current affiliations of the 2023 physics laureates, according to the NobelPrize.org website:

Pierre Agostini: The Ohio State University (OSU), Columbus, USA

Ferenc Krausz: Max Planck Institute of Quantum Optics (MPQ) and Ludwig Maximilian University of Munich (LMU), Germany

Anne L’Huillier: Lund University, Sweden

First Light for a Next-Generation Light Source

X-ray free-electron lasers (XFELs) first came into existence two decades ago. They have since enabled pioneering experiments that “see” both the ultrafast and the ultrasmall. Existing devices typically generate short and intense x-ray pulses at a rate of around 100 x-ray pulses per second. But one of these facilities, the Linac Coherent Light Source (LCLS) at the SLAC National Accelerator Laboratory in California, is set to eclipse this pulse rate. The LCLS Collaboration has now announced “first light” for its upgraded machine, LCLS-II. When it is fully up and running, LCLS-II is expected to fire one million pulses per second, making it the world’s most powerful x-ray laser.

The LCLS-II upgrade signifies a quantum leap in the machine’s potential for discovery, says Robert Schoenlein, the LCLS’s deputy director for science. Now, rather than “demonstration” experiments on simple, model systems, scientists will be able to explore complex, real-world systems, he adds. For example, experimenters could peer into biological systems at ambient temperatures and physiological conditions, study photochemical systems and catalysts under the conditions in which they operate, and monitor nanoscale fluctuations of the electronic and magnetic correlations thought to govern the behavior of quantum materials.

The XFEL was first proposed in 1992 to tackle the challenge of building an x-ray laser. Conventional laser schemes excite large numbers of atoms into states from which they emit light. But excited states with energies corresponding to x-ray wavelengths are too short-lived to build up a sizeable excited-state population. XFELs instead rely on electrons traveling at relativistic speed through a periodic magnetic array called an undulator. Moving in a bunch, the electrons wiggle through the undulator, emitting x-ray radiation that interacts multiple times with the bunch and becomes amplified. The result is a bright x-ray beam with laser coherence.
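The undulator sets the emitted wavelength through the standard free-electron-laser resonance condition, λ = λ_u (1 + K²/2) / (2γ²). Here λ_u is the undulator period, K is the dimensionless undulator strength and γ is the electrons’ Lorentz factor. The 1/γ² factor is why relativistic electrons turn a centimeter-scale magnet period into nanometer-scale light. A minimal sketch follows; the beam energies, the 3 cm period and K = 3.5 are illustrative assumptions, not LCLS-II specifications.

```python
ELECTRON_REST_ENERGY_MEV = 0.51099895  # m_e c^2 in MeV

def lorentz_factor(energy_gev: float) -> float:
    """gamma = E / (m_e c^2) for an electron of total energy E."""
    return energy_gev * 1e3 / ELECTRON_REST_ENERGY_MEV

def resonant_wavelength_nm(energy_gev: float, undulator_period_cm: float, k: float) -> float:
    """On-axis fundamental wavelength: lambda = lambda_u (1 + K^2/2) / (2 gamma^2)."""
    gamma = lorentz_factor(energy_gev)
    period_nm = undulator_period_cm * 1e7  # 1 cm = 1e7 nm
    return period_nm * (1 + k ** 2 / 2) / (2 * gamma ** 2)

if __name__ == "__main__":
    # Hypothetical parameters chosen only for illustration
    for energy in (4.0, 8.0, 14.0):
        lam = resonant_wavelength_nm(energy, undulator_period_cm=3.0, k=3.5)
        print(f"{energy:5.1f} GeV -> {lam:.3f} nm")
```

With these made-up numbers a 4 GeV beam radiates near 1.7 nm (soft x rays), and doubling the electron energy shortens the wavelength fourfold. This is why hard x rays call for multi-GeV electron beams.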

The first XFEL was built in Hamburg, Germany, in 2005. Today that XFEL emits “soft” x-ray radiation, which has wavelengths as short as a few nanometers. LCLS switched on four years later and expanded XFELs’ reach to the much shorter wavelengths of “hard” x rays, which are essential to atomic-resolution imaging and diffraction experiments. These and other facilities that later appeared in Japan, Italy, South Korea, Germany, and Switzerland have enabled scientists to probe catalytic reactions in real time, solve the structures of hard-to-crystallize proteins, and shed light on the role of electron–phonon coupling in high-temperature superconductors. Recording movies of the dynamics of molecules, atoms, and even electrons also became possible because x-ray pulses can be as short as a couple of hundred attoseconds.

The upgrades to LCLS offer a new mode of XFEL operation, in which the facility delivers an almost continuous x-ray beam in the form of a megahertz pulse train. For the original LCLS, the pulse rate, which maxed out at 120 Hz, was set by the properties of the linear accelerator that produced the relativistic electrons. Built out of copper, a conventional metal, and operated at room temperature, the accelerator had to be switched on and off 120 times per second to avoid heat-induced damage. In LCLS-II some of the copper has been replaced with niobium, which is superconducting at the operating temperature of 2 K. Bypassing the damage limitations of copper, the dissipationless superconducting elements allow an 8000-fold gain in the maximum repetition rate. The new superconducting technology is also expected to reduce “jitter” in the beam, says LCLS director Michael Dunne. Greater stability and reproducibility, higher repetition rate, and increased average power “will transform our ability to look at a whole range of systems,” he adds.
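The 8000-fold figure is simply the ratio of the two pulse rates quoted above: one million pulses per second against 120 per second is a factor of about 8300. The short sketch below reproduces that arithmetic and converts it into acquisition time. The number of pulses an experiment needs is a hypothetical input, and the estimate deliberately ignores detector readout and sample-delivery limits, which in practice may cap the usable rate.

```python
OLD_RATE_HZ = 120         # original LCLS maximum repetition rate
NEW_RATE_HZ = 1_000_000   # LCLS-II target: one million pulses per second

def rate_gain(old_hz: float = OLD_RATE_HZ, new_hz: float = NEW_RATE_HZ) -> float:
    """Factor by which the pulse rate increases."""
    return new_hz / old_hz

def acquisition_seconds(shots: int, rate_hz: float) -> float:
    """Wall-clock time to record `shots` pulses, ignoring detector and sample limits."""
    return shots / rate_hz

if __name__ == "__main__":
    shots = 50_000_000  # hypothetical number of pulses an experiment needs
    print(f"gain: {rate_gain():.0f}x")
    print(f"at 120 Hz: {acquisition_seconds(shots, OLD_RATE_HZ) / 3600:.1f} h")
    print(f"at 1 MHz:  {acquisition_seconds(shots, NEW_RATE_HZ) / 60:.1f} min")
```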

LCLS-II is a boon for time-resolved chemistry-focused experiments, says Munira Khalil, a physical chemist at the University of Washington in Seattle. Khalil, a user of LCLS, plans to take advantage of the photon bounty of the rapid-fire pulses to perform dynamical experiments. She hopes such experiments may fulfill “a chemist’s dream”: real-time observations of the coupled motion of atoms and electrons. With extra photons, scientists could also probe dilute samples, potentially shedding light on how metals bind to specific sites in proteins—a process relevant to the function of half of all of nature’s proteins.

The megahertz pulse rate also means that experiments that previously took days to perform could now be completed in hours or minutes, says Henry Chapman of the Center for Free Electron Laser Science at DESY, Germany. At LCLS and later at Hamburg’s XFEL, Chapman ran pioneering experiments to determine the structures of proteins. The method he used, called serial crystallography, involves merging the diffraction patterns of multiple samples sequentially injected into the XFEL’s beam. Serial crystallography has allowed scientists to determine the structures of biologically relevant proteins that form crystals too small to study with conventional crystallography techniques. Chapman says that the increased throughput enabled by LCLS-II will permit much more ambitious experiments, such as measurements of biomolecular reactions on timescales from femtoseconds to milliseconds. “One could also think of an ‘on the fly’ analysis that feeds back into the experiment to discover optimal conditions for drug binding or catalysis,” he says.
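The merging step can be illustrated with the simplest strategy used in serial crystallography, often called Monte Carlo merging. Each snapshot records only partial intensities for the reflections it happens to catch, and averaging the many observations of each Miller index (h, k, l) converges on the full intensity as the number of snapshots grows. The toy sketch below uses synthetic data. It assumes a partiality factor with mean 1 so that plain averaging is unbiased, a simplification, and it ignores scaling, partiality modelling and indexing ambiguities, all of which real analysis pipelines must handle.

```python
import random
from collections import defaultdict

Miller = tuple[int, int, int]

def merge(observations: list[tuple[Miller, float]]) -> dict[Miller, float]:
    """Average all observed intensities of each reflection (Monte Carlo merging)."""
    sums: dict[Miller, float] = defaultdict(float)
    counts: dict[Miller, int] = defaultdict(int)
    for hkl, intensity in observations:
        sums[hkl] += intensity
        counts[hkl] += 1
    return {hkl: sums[hkl] / counts[hkl] for hkl in sums}

def simulate_snapshots(true_intensities: dict[Miller, float], n_snapshots: int,
                       seed: int = 0) -> list[tuple[Miller, float]]:
    """Hypothetical snapshots: each sees a random subset of reflections, partially recorded."""
    rng = random.Random(seed)
    observations = []
    for _ in range(n_snapshots):
        for hkl, full in true_intensities.items():
            if rng.random() < 0.3:              # reflection happens to diffract in this shot
                partiality = rng.uniform(0, 2)  # mean 1, so plain averaging is unbiased
                observations.append((hkl, full * partiality))
    return observations

if __name__ == "__main__":
    truth = {(1, 0, 0): 100.0, (1, 1, 0): 40.0, (2, 1, 1): 5.0}
    merged = merge(simulate_snapshots(truth, n_snapshots=20_000))
    for hkl, value in sorted(merged.items()):
        print(hkl, round(value, 1), "vs true", truth[hkl])
```

The statistical error of each merged value shrinks roughly as one over the square root of the number of observations, which is why a higher pulse rate translates directly into faster or more precise structure determination.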

For Khalil, the dramatic speedup of the experiments is a key advance of LCLS-II, as she thinks it will make these kinds of experiments accessible to a wider group of people. Until now, she says, XFEL facilities were mostly used by people who had the opportunity to work extensively at XFELs as postdocs or graduate students. Many more experimenters should now be able to enter the XFEL arena, she says. “It’s an exciting time for the field.”

–Matteo Rini

Matteo Rini is the Editor of Physics Magazine.

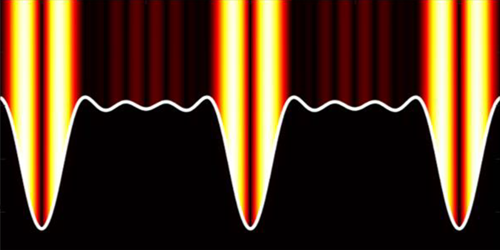

Metallic Gratings Produce a Strong Surprise

Using a metallic grating and infrared light, researchers have uncovered a light–matter coupling regime where the local coupling strength can be 3.5 times higher than the global average for the material.

When light and matter interact, quasiparticles called polaritons can form. Polaritons can change a material’s chemical reactivity or its electronic properties, but the details behind how those changes occur remain unknown. A new finding by Rakesh Arul and colleagues at the University of Cambridge could help change that [1, 2]. The team has uncovered an interaction regime where the light–matter coupling strength significantly exceeds that seen in previous experiments. Arul says that the discovery could improve researchers’ understanding of how polaritons induce material changes and could allow the exploration of a wider range of these quasiparticles.

Light–matter coupling experiments are typically performed using molecules trapped in an optical cavity. Researchers have explored the coupling of such systems to visible and microwave radiation, but probing the wavelengths in between has been tricky because of difficulties in detecting polaritons created using infrared light. Midinfrared wavelengths are interesting because they correspond to the frequencies of molecular vibrations, which can control a material’s optoelectronic properties.

To study this regime, Arul and his colleagues developed a technique to shift the wavelengths of midinfrared polaritons to the visible range, where detectors can better pick them up. Rather than molecules in a cavity, they coupled light to molecules atop a metallic grating. When irradiated with a visible laser beam, polaritons generated by the infrared radiation imprinted a signal on the scattered visible light, allowing polariton detection.
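The conversion relies on Raman scattering, the technique named in the title of Ref. [1]. Light scattered from a visible laser is shifted in frequency by the frequency of the vibration (or polariton) it interacts with, so a midinfrared excitation appears as a sideband close to the visible laser line, where detectors are sensitive. A minimal sketch of that bookkeeping in wavenumbers follows; the 633 nm laser and the 6 µm (about 1667 cm⁻¹) mode are hypothetical values, not those of the experiment.

```python
def wavenumber_cm1(wavelength_nm: float) -> float:
    """Convert a vacuum wavelength in nm to a wavenumber in cm^-1."""
    return 1e7 / wavelength_nm

def raman_sidebands_nm(laser_nm: float, mode_cm1: float) -> tuple[float, float]:
    """Return (Stokes, anti-Stokes) wavelengths in nm for a mode of the given wavenumber."""
    laser_cm1 = wavenumber_cm1(laser_nm)
    stokes = 1e7 / (laser_cm1 - mode_cm1)       # scattered photon lost one vibrational quantum
    anti_stokes = 1e7 / (laser_cm1 + mode_cm1)  # scattered photon gained one
    return stokes, anti_stokes

if __name__ == "__main__":
    mode = wavenumber_cm1(6000.0)  # hypothetical 6 um midinfrared mode, about 1667 cm^-1
    print(f"mode: {mode:.0f} cm^-1")
    print("Stokes / anti-Stokes (nm):", raman_sidebands_nm(633.0, mode))
```

Under these assumptions both sidebands fall in the visible (near 708 nm and 573 nm), even though the mode itself sits at a wavelength ten times longer.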

Using their technique, the team found a light–matter coupling strength at specific locations that was 350% higher than the average for the whole system. Researchers have been exploring the interaction of light with metallic gratings since the time of Lord Rayleigh, Arul says. “The system is still delivering surprises.”
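A common way to picture a coupling strength is the generic two-coupled-mode model. A light mode at frequency ω_c and a molecular vibration at ω_v, coupled with strength g, hybridize into lower and upper polaritons at ω± = (ω_c + ω_v)/2 ± sqrt(g² + (ω_c − ω_v)²/4). At resonance the splitting is 2g, so a local coupling 3.5 times the average produces a local splitting 3.5 times larger. The sketch below uses this textbook model with hypothetical frequencies in cm⁻¹. It is not the theory of Ref. [2], it neglects the counter-rotating terms that become important in the ultrastrong regime, and the "g/ω of order 0.1" threshold for ultrastrong coupling is a common convention rather than a figure from the article.

```python
import math

def polariton_frequencies(omega_c: float, omega_v: float, g: float) -> tuple[float, float]:
    """Eigenvalues of [[omega_c, g], [g, omega_v]]: (lower, upper) polariton."""
    mean = (omega_c + omega_v) / 2
    half_split = math.sqrt(g ** 2 + ((omega_c - omega_v) / 2) ** 2)
    return mean - half_split, mean + half_split

def normalized_coupling(g: float, omega_v: float) -> float:
    """g / omega; values of order 0.1 and above are usually called ultrastrong."""
    return g / omega_v

if __name__ == "__main__":
    omega = 1700.0           # hypothetical vibrational frequency, cm^-1, light mode tuned to it
    g_average = 40.0         # hypothetical sample-averaged coupling, cm^-1
    g_local = 3.5 * g_average
    for label, g in (("average", g_average), ("local", g_local)):
        lower, upper = polariton_frequencies(omega, omega, g)
        print(f"{label}: splitting {upper - lower:.0f} cm^-1, "
              f"g/omega = {normalized_coupling(g, omega):.3f}")
```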

–Katherine Wright

Katherine Wright is the Deputy Editor of Physics Magazine.

References

- R. Arul et al., “Raman probing the local ultrastrong coupling of vibrational plasmon polaritons on metallic gratings,” Phys. Rev. Lett. 131, 126902 (2023).

- M. S. Rider et al., “Theory of strong coupling between molecules and surface plasmons on a grating,” Nanophotonics 11, 3695 (2022).

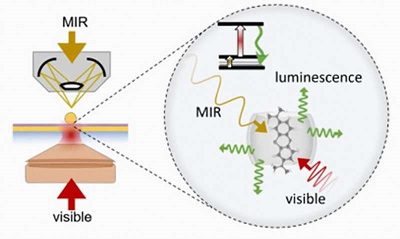

Making Mid-Infrared Light Visible at Room Temperature

Mid-infrared vibrationally assisted luminescence (MIRVAL)

Researchers at the Universities of Birmingham and Cambridge have used quantum systems to develop a new method for detecting mid-infrared (MIR) light at room temperature.

The research, published in Nature Photonics, was carried out at the Cavendish Laboratory at the University of Cambridge and represents a significant advance in scientists’ ability to gain insight into the workings of chemical and biological molecules.

In the new method, the team used quantum systems to convert low-energy MIR photons into high-energy visible photons by means of molecular emitters. The innovation could help scientists detect MIR light and perform spectroscopy at the single-molecule level at room temperature.

Dr Rohit Chikkaraddy, an Assistant Professor at the University of Birmingham and lead author of the study, explains: “The bonds that maintain the distance between atoms in molecules can vibrate like springs, and these vibrations resonate at very high frequencies. These springs can be excited by light in the mid-infrared region, which is invisible to the human eye.

“At room temperature, these springs are in random motion, which means that a major challenge in detecting mid-infrared light is avoiding this thermal noise. Modern detectors rely on cooled semiconductor devices that are energy-intensive and bulky, but our research presents a new and exciting way to detect this light at room temperature.”
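A rough estimate shows why thermal noise is the obstacle. At temperature T the mean occupation of a mode of frequency ν is the Bose–Einstein factor n = 1/(exp(hν/k_BT) − 1). At 300 K the thermal energy k_BT corresponds to only about 208 cm⁻¹ (a wavelength of roughly 48 µm), so midinfrared modes are appreciably thermally populated while visible ones are not. The sketch compares a hypothetical 10 µm midinfrared mode with a 500 nm visible mode at room temperature and at 77 K (liquid-nitrogen temperature, chosen as an example of a cooled detector); the specific wavelengths are illustrative assumptions.

```python
import math

H = 6.62607015e-34   # Planck constant, J s
C = 299_792_458.0    # speed of light, m/s
K_B = 1.380649e-23   # Boltzmann constant, J/K

def thermal_occupation(wavelength_um: float, temperature_k: float) -> float:
    """Mean Bose-Einstein occupation of a mode with the given vacuum wavelength."""
    x = H * C / (wavelength_um * 1e-6 * K_B * temperature_k)  # h*nu / (k_B T)
    return 1.0 / math.expm1(x)

if __name__ == "__main__":
    for label, lam in (("mid-infrared, 10 um", 10.0), ("visible, 0.5 um", 0.5)):
        for temp in (300.0, 77.0):
            print(f"{label} at {temp:.0f} K: n = {thermal_occupation(lam, temp):.2e}")
```

Under these assumptions the room-temperature occupation of the 10 µm mode is below one percent but some forty orders of magnitude above that of the visible mode, which is why converting the signal into visible photons sidesteps most of the thermal background.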

The new approach is called MIR vibrationally assisted luminescence (MIRVAL) and uses molecules that can interact with both MIR and visible light. The team was able to assemble the molecular emitters in a very small plasmonic cavity that was resonant in both the MIR and the visible ranges. They then engineered it so that the molecular vibrational states and electronic states could interact, resulting in efficient transduction of MIR light into enhanced visible luminescence.

Dr Chikkaraddy continued: “The most challenging aspect was to bring together three vastly different length scales (the visible wavelength, which is a few hundred nanometers; molecular vibrations, which are less than a nanometer; and mid-infrared wavelengths, which are ten thousand nanometers) into a single platform and combine them effectively.”

By creating picocavities (extremely small light-trapping cavities formed by single-atom defects on the metallic facets), the researchers were able to reach an extreme light-confinement volume below one cubic nanometer. This meant that the team could confine MIR light down to the size of a single molecule.
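For scale, a conventional optical focus cannot squeeze light much below a volume of order (λ/2)³, the rough diffraction-limit convention assumed here. For a 10,000 nm midinfrared wavelength that is about 1.25 × 10¹¹ nm³, so confining the field to under 1 nm³ compresses it by roughly eleven orders of magnitude. A sketch of the arithmetic, with the visible wavelength taken as a hypothetical 500 nm:

```python
def diffraction_limited_volume_nm3(wavelength_nm: float) -> float:
    """Rough diffraction-limited mode volume, taken here as (lambda / 2)^3."""
    return (wavelength_nm / 2) ** 3

def compression_factor(wavelength_nm: float, mode_volume_nm3: float) -> float:
    """How many times smaller the given mode volume is than (lambda / 2)^3."""
    return diffraction_limited_volume_nm3(wavelength_nm) / mode_volume_nm3

if __name__ == "__main__":
    for lam in (500.0, 10_000.0):  # hypothetical visible wavelength and the mid-infrared scale
        print(f"{lam:>8.0f} nm: {compression_factor(lam, 1.0):.2e}x smaller than (lambda/2)^3")
```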

This advance has the potential to deepen our understanding of complex systems and opens a window onto infrared-active molecular vibrations, which are typically inaccessible at the single-molecule level. But MIRVAL could also prove useful in a number of fields beyond pure scientific research.

Dr Chikkaraddy concludes: “MIRVAL could have a number of applications, such as real-time gas sensing, medical diagnostics, astronomical surveys and quantum communication, since we can now see the vibrational fingerprint of individual molecules at MIR frequencies. The ability to detect MIR at room temperature means that it is far easier to explore these applications and to carry out further research in this field.”

With further development, this new method could not only find its way into practical devices that will shape the future of MIR technologies, but also provide the ability to coherently manipulate “ball-and-spring” atoms in molecular quantum systems.

Source:

Making the invisible, visible: New method makes mid-infrared light detectable at room temperature

Persian source: https://www.psi.ir/news2_fa.asp?id=3938

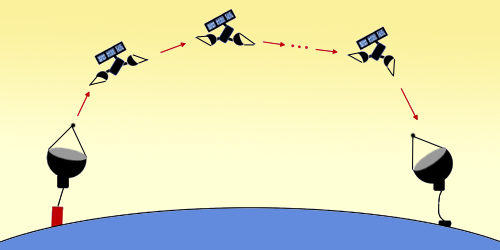

Global Quantum Communication via a Satellite Train

Long-distance quantum communication can be achieved by directly sending light through space using a train of orbiting satellites that function as optical lenses.

Transferring quantum information between widely separated locations is necessary to develop a global quantum network. This project is hindered by the high photon loss inherent to long-distance fiber-based transmission—the default for photonic qubits. To get around this problem, researchers have demonstrated the transmission of a quantum signal via satellite instead (see Viewpoint: Paving the Way for Satellite Quantum Communications). Now Sumit Goswami of the University of Calgary, Canada, and Sayandip Dhara of the University of Central Florida show how quantum information could be relayed over large distances by a network of such satellites [1].

The scheme involves a train of satellites in low-Earth orbit, each equipped with a pair of reflecting telescopes. A given satellite would receive a photonic qubit using one telescope and transmit the qubit onward using the other. The satellite train would effectively bend photons around Earth’s curvature while controlling photon loss due to beam divergence. The team likens the scheme to a set of lenses on an optical table.

Simulations of satellites 120 km apart with 60-cm-diameter telescopes showed that beam-divergence loss vanished. Over a distance of 20,000 km, total losses (primarily reflection loss but also alignment and focusing errors) could be kept orders of magnitude below those of a few hundred kilometers of optical fiber. Ultrahigh-reflectivity telescope mirrors could further decrease this loss.
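The comparison with fiber can be framed as a decibel budget. Silica telecom fiber attenuates by roughly 0.2 dB per km near 1550 nm, so a few hundred kilometers already costs tens of dB, and the loss grows linearly with distance. The satellite train’s loss instead grows with the number of relay hops. The sketch below lumps mirror reflectivity and pointing and focusing errors into a single hypothetical per-hop transmission; the value 0.99 is an illustration, not a figure from the paper.

```python
import math

def fiber_loss_db(length_km: float, attenuation_db_per_km: float = 0.2) -> float:
    """Total attenuation of a fiber link in dB."""
    return length_km * attenuation_db_per_km

def satellite_train_loss_db(distance_km: float, spacing_km: float,
                            per_hop_transmission: float) -> float:
    """Loss in dB for ceil(distance / spacing) hops, each passing the given fraction of photons."""
    hops = math.ceil(distance_km / spacing_km)
    return -10 * hops * math.log10(per_hop_transmission)

def transmission(loss_db: float) -> float:
    """Convert a loss in dB into the fraction of photons that survive."""
    return 10 ** (-loss_db / 10)

if __name__ == "__main__":
    sat = satellite_train_loss_db(20_000, 120, per_hop_transmission=0.99)  # hypothetical 0.99
    for km in (300, 20_000):
        print(f"fiber, {km} km: {fiber_loss_db(km):.0f} dB")
    print(f"satellite train, 20,000 km: {sat:.1f} dB, transmission {transmission(sat):.2f}")
```

With this assumed per-hop figure, 167 hops cost about 7 dB over 20,000 km, compared with about 60 dB for just 300 km of fiber.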

Goswami and Dhara examined two protocols based on such a setup. In one, two entangled photons are transmitted in opposite directions from a satellite-based source. In the other, qubits are transmitted unidirectionally, with source and detector both on the ground. Despite the effects of atmospheric turbulence, the latter performed well and has the advantage of keeping the necessary quantum hardware on Earth.

–Rachel Berkowitz

Rachel Berkowitz is a Corresponding Editor for Physics Magazine based in Vancouver, Canada.

References

- S. Goswami and S. Dhara, “Satellite-relayed global quantum communication without quantum memory,” Phys. Rev. Appl. 20, 024048 (2023).

Milestone for Optical-Lattice Quantum Computer

Quantum mechanically entangled groups of eight and ten ultracold atoms provide a critical demonstration for optical-lattice-based quantum processing.

Ultracold atoms trapped in an optical lattice (a periodic array of laser-produced trapping sites) could potentially be used to perform quantum computations and should be scalable, according to experts. But until now researchers had failed to accomplish a critical step: quantum mechanically entangling more than two atoms at a time. Now Jian-Wei Pan of the University of Science and Technology of China and his colleagues have entangled one-dimensional chains of ten atoms and two-dimensional groups of eight atoms with high reliability [1]. The team has also demonstrated control and imaging of the states of the atoms with single-atom resolution [1]. The results show that several of the building blocks needed for optical-lattice-based quantum processors are now practical.

Previously, Pan and his colleagues had entangled pairs of atoms in a system containing over 2000 rubidium atoms [2]. In that experiment they used a two-dimensional lattice that had two trapping locations at each lattice site, a so-called superlattice. To entangle larger numbers of atoms, the researchers again used the superlattice. They also used additional technologies: a so-called quantum gas microscope and three digital micromirror devices, which are spatial light modulators with high spatial precision. These tools provided the team with the single-atom resolution needed to create and then verify the simultaneous entanglement of groups of up to ten atoms within the set of 100 or so atoms involved in these experiments. Single-atom resolution will ultimately be necessary for quantum computers, so that devices can manipulate individual qubits and read out their values.
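The entangling ingredient named in the title of Ref. [1], spin exchange, can be illustrated for a single pair of atoms. Two neighboring spins coupled by an exchange interaction of strength J swap |↑↓⟩ and |↓↑⟩ coherently. In that two-state subspace the evolution is U(t) = cos(Jt/2)·1 − i sin(Jt/2)·σ_x (with ħ = 1 and a global phase dropped). Stopping at Jt = π/2, a "square-root-of-SWAP" gate, turns the product state |↑↓⟩ into a maximally entangled pair. The sketch tracks the amplitudes a and b of a|↑↓⟩ + b|↓↑⟩ and the concurrence 2|ab|. It is a generic two-site illustration in dimensionless time, not the team’s multi-atom protocol.

```python
import math

def exchange_evolution(a: complex, b: complex, j_t: float) -> tuple[complex, complex]:
    """Evolve a|up,down> + b|down,up> under exchange for dimensionless time J*t.

    In this subspace U = cos(Jt/2) I - i sin(Jt/2) sigma_x (global phase dropped, hbar = 1).
    """
    c, s = math.cos(j_t / 2), math.sin(j_t / 2)
    return c * a - 1j * s * b, -1j * s * a + c * b

def concurrence(a: complex, b: complex) -> float:
    """Concurrence of a|up,down> + b|down,up>: 0 for product states, 1 for maximal entanglement."""
    return 2 * abs(a * b)

if __name__ == "__main__":
    start = (1 + 0j, 0j)  # atom 1 spin up, atom 2 spin down: a product state
    for j_t in (0.0, math.pi / 4, math.pi / 2, math.pi):
        a, b = exchange_evolution(*start, j_t)
        print(f"Jt = {j_t:.3f}: |a|^2 = {abs(a) ** 2:.3f}, concurrence = {concurrence(a, b):.3f}")
```

At Jt = π the spins have simply swapped and the concurrence returns to zero, so the entangling step depends on timing the exchange precisely. Controlling such timing site by site is where single-atom-resolved tools like those described above come in.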

–David Ehrenstein

David Ehrenstein is a Senior Editor for Physics Magazine.

References

- W.-Y. Zhang et al., “Scalable multipartite entanglement created by spin exchange in an optical lattice,” Phys. Rev. Lett. 131, 073401 (2023).

- H.-N. Dai et al., “Generation and detection of atomic spin entanglement in optical lattices,” Nat. Phys. 12, 783 (2016).